Nowadays, data and information are considered some of the most valuable assets a company can have. Most companies use a range of technologies to evaluate markets more effectively, and web scraping remains one of the most widely used methods of extracting information. This article covers professional web scraping with Java and the basics of configuring a scraper.

Why Use Java for Web Scraping?

A wide range of tools built specifically for data harvesting makes Java a solid choice for this kind of task. The language offers almost every instrument you need for efficient, high-quality data collection, and popular libraries provide APIs for fetching a URL and parsing its HTML.

Another major benefit of Java, shared with Python and some other languages, is its broad compatibility with operating systems. You can move your project from one popular environment to another without leaving the language you started with. Java can also deliver strong performance in resource-intensive data collection projects, largely because it supports running multiple operations in parallel. This lets you extract data from more than one page at a time.
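To illustrate the parallel extraction mentioned above, here is a minimal sketch that fetches several pages at once with a fixed thread pool from `java.util.concurrent`. The `fetcher` function is a hypothetical stand-in: in a real project it would wrap a JSoup or HtmlUnit call. The URLs are placeholders.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.Future;
import java.util.function.Function;

// Fetches several pages concurrently with a fixed-size thread pool.
// The fetcher is a placeholder: plug in JSoup, HtmlUnit, or java.net.http here.
class ParallelScraper {

    static List<String> fetchAll(List<String> urls, Function<String, String> fetcher, int threads)
            throws Exception {
        ExecutorService pool = Executors.newFixedThreadPool(threads);
        try {
            List<Future<String>> futures = new ArrayList<>();
            for (String url : urls) {
                // Each URL becomes a separate task that can run on its own thread
                futures.add(pool.submit(() -> fetcher.apply(url)));
            }
            List<String> results = new ArrayList<>();
            for (Future<String> f : futures) {
                // get() blocks until that task finishes, so results keep the input order
                results.add(f.get());
            }
            return results;
        } finally {
            pool.shutdown();
        }
    }

    public static void main(String[] args) throws Exception {
        // Hypothetical URLs and a dummy fetcher that only echoes the URL
        List<String> pages = fetchAll(
                List.of("https://example.com/page1", "https://example.com/page2"),
                url -> "fetched " + url,
                4);
        pages.forEach(System.out::println);
    }
}
```

Because `Function` cannot throw checked exceptions, a real fetcher would have to catch `IOException` itself. The thread count is a tuning knob: more threads means more simultaneous requests, which also makes the scraper easier for a site to notice.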

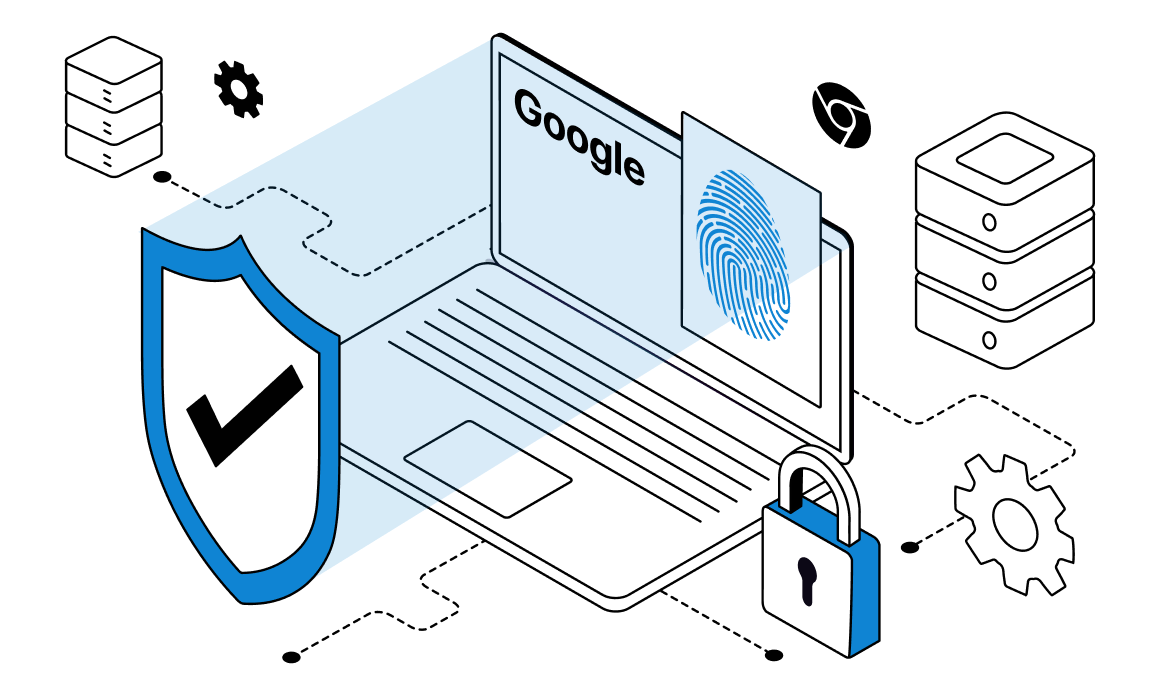

For beginners and even experienced amateurs, Java's compiler and static typing act as a useful safety net: they catch many coding mistakes, such as misspelled identifiers or mismatched types, before the program ever runs. This lets you spot problems early instead of hunting for them later. Ultimately, this improves the stability and overall quality of your project. Java scraping projects can also easily benefit from proxy setups. For example, you could run your scraper through a rotating datacenter proxy setup to power up any project you have or are planning to create.
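As a sketch of the proxy idea, the JDK's built-in `java.net.http.HttpClient` (Java 11+) can send every request through one proxy endpoint. This assumes your provider exposes a single gateway host and port that handles rotation on its side and requires no credentials; `proxy.example.com:8080` is a placeholder. If your provider needs a username and password, you would also have to configure an `Authenticator`, which is not shown here.

```java
import java.net.InetSocketAddress;
import java.net.ProxySelector;
import java.net.URI;
import java.net.http.HttpClient;
import java.net.http.HttpRequest;
import java.net.http.HttpResponse;
import java.time.Duration;

// Routes all requests made by this client through one HTTP proxy endpoint.
class ProxyClient {

    static HttpClient buildClient(String proxyHost, int proxyPort) {
        return HttpClient.newBuilder()
                .proxy(ProxySelector.of(new InetSocketAddress(proxyHost, proxyPort)))
                .connectTimeout(Duration.ofSeconds(10))
                .build();
    }

    static String fetch(HttpClient client, String url) throws Exception {
        HttpRequest request = HttpRequest.newBuilder(URI.create(url))
                // Example User-Agent value; many sites reject requests without one
                .header("User-Agent", "Mozilla/5.0 (compatible; ExampleScraper/1.0)")
                .GET()
                .build();
        HttpResponse<String> response = client.send(request, HttpResponse.BodyHandlers.ofString());
        return response.body();
    }

    public static void main(String[] args) throws Exception {
        // Placeholder proxy endpoint: replace with your provider's host and port
        HttpClient client = buildClient("proxy.example.com", 8080);
        System.out.println(fetch(client, "https://example.com"));
    }
}
```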

Last but not least, Java has a large community of developers and enthusiasts. You can easily find answers and documentation for many different Java use cases. At some point in your projects, community support can be a real advantage.

Web Scraping Frameworks

HtmlUnit and JSoup are two of the most widely used Java tools for this job. HtmlUnit is a GUI-less browser for Java programs, so it provides the features you need to work with a browser environment. This library is a good fit for tasks that involve browser testing, web scraping, and similar work. To speed up data collection, HtmlUnit lets you turn off JavaScript, CSS, and other processing you do not need.
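A minimal sketch of an HtmlUnit client prepared for scraping, with JavaScript and CSS switched off. It assumes HtmlUnit 3.x, whose classes live in the `org.htmlunit` package; in 2.x the same classes are under `com.gargoylesoftware.htmlunit`.

```java
import org.htmlunit.BrowserVersion;
import org.htmlunit.WebClient;

class HtmlUnitSetup {

    // Creates a WebClient tuned for scraping static HTML:
    // JavaScript and CSS processing are disabled to save time and memory.
    static WebClient createScrapingClient() {
        WebClient client = new WebClient(BrowserVersion.CHROME);
        client.getOptions().setJavaScriptEnabled(false);
        client.getOptions().setCssEnabled(false);
        // Do not abort on broken scripts or 4xx/5xx responses; inspect them yourself instead
        client.getOptions().setThrowExceptionOnScriptError(false);
        client.getOptions().setThrowExceptionOnFailingStatusCode(false);
        return client;
    }

    public static void main(String[] args) {
        try (WebClient client = createScrapingClient()) {
            System.out.println("JavaScript enabled: " + client.getOptions().isJavaScriptEnabled());
        }
    }
}
```

Leave JavaScript enabled only when the data you need is rendered by scripts; for plain HTML pages the disabled setting is usually much faster.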

The second tool commonly used for working with HTML is JSoup. It is a great fit for parsing HTML, navigating the document tree, and extracting elements with CSS selectors, as well as for other tasks that involve HTML code. You can also route JSoup requests through datacenter proxies to improve the overall performance of your data collection project.
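For example, JSoup's `Connection` has a `proxy(host, port)` method that routes a request through an HTTP proxy. This sketch assumes jsoup is on the classpath; the proxy host and port are placeholders for your provider's values.

```java
import java.io.IOException;
import org.jsoup.Connection;
import org.jsoup.Jsoup;
import org.jsoup.nodes.Document;

class JsoupProxy {

    // Builds (but does not yet send) a JSoup request that goes through an HTTP proxy
    static Connection proxiedConnection(String url, String proxyHost, int proxyPort) {
        return Jsoup.connect(url)
                .proxy(proxyHost, proxyPort)
                .timeout(10_000); // milliseconds
    }

    public static void main(String[] args) throws IOException {
        // Placeholder proxy endpoint and target URL; get() sends the request
        Document doc = proxiedConnection("https://example.com", "proxy.example.com", 8080).get();
        System.out.println(doc.title());
    }
}
```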

Prerequisites for Building a Web Scraper With Java

The instructions in this article assume that you already have some experience with the Java programming language. The guide uses Maven for package management.

You will also need a basic understanding of how pages are built with HTML. Knowledge of CSS selectors, XPath, and API integrations will be helpful as well.

Quick Overview of CSS Selectors

Before we start, it is useful to review the CSS selectors used in the next steps. Check the examples below before continuing with the instructions.

#firstname – selects the element whose id equals “firstname”

.blue – selects elements that have “blue” among their classes

p – selects all <p> tags

div#firstname – selects <div> elements whose id equals “firstname”

p.link.new – selects <p class="link new">

p.link .new – selects elements with class “new” that are inside <p class="link">
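To try the selectors above, you can pass them to JSoup's `select()` method, which accepts CSS-style queries. The sample HTML is invented for this demonstration, and the sketch assumes jsoup is on the classpath.

```java
import org.jsoup.Jsoup;
import org.jsoup.nodes.Document;

class SelectorDemo {

    // Small sample page for trying out the selectors from the list
    static final String HTML =
            "<div id='firstname'>Ada</div>"
            + "<span class='blue'>blue span</span>"
            + "<p class='link new'>link and new</p>"
            + "<p class='link'><a class='new' href='/n'>nested new</a></p>";

    // Joins the text of every element matching the query, separated by spaces
    static String textOf(Document doc, String cssQuery) {
        return doc.select(cssQuery).text();
    }

    public static void main(String[] args) {
        Document doc = Jsoup.parse(HTML);
        String[] queries = {"#firstname", ".blue", "p", "div#firstname", "p.link.new", "p.link .new"};
        for (String q : queries) {
            System.out.println(q + " -> " + textOf(doc, q));
        }
    }
}
```

Note the difference between the last two queries: `p.link.new` matches the first paragraph itself, while `p.link .new` (with a space) matches only the link nested inside the second paragraph.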

Web Scraping With Java Using JSoup

JSoup is one of the main Java tools for scraping pages by URL. This walkthrough presents the general web scraping workflow in Java with this library.

First, open your IDE and create a new project. Choose Maven as the build system and give the project a name. The new project should already contain two files. Now you can add libraries with Maven: open the pom.xml file and add a dependency on JSoup. After that, we can continue working on the scraper.

Locate the data on the page that you want to scrape and note the elements that contain it. If you only need a few kinds of elements, you can select them individually instead of collecting everything at once.

JSoup has a built-in method called connect(). It fetches the page at a given URL and, once you call get(), returns the parsed HTML as a Document object. Keep in mind that many modern websites reject requests that lack specific HTTP headers. To avoid this, set the headers manually before scraping. The User-Agent header, for example, tells the site what kind of device, operating system, and browser is requesting the data.
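A sketch of setting request headers before calling `get()`. The User-Agent string, the `Accept-Language` value, and the referrer are example values, not ones any particular site is known to require.

```java
import java.io.IOException;
import org.jsoup.Connection;
import org.jsoup.Jsoup;
import org.jsoup.nodes.Document;

class JsoupFetch {

    // Sets browser-like headers on the request; adjust the values for your target site
    static Connection buildRequest(String url) {
        return Jsoup.connect(url)
                .userAgent("Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 "
                        + "(KHTML, like Gecko) Chrome/120.0 Safari/537.36")
                .header("Accept-Language", "en-US,en;q=0.9")
                .referrer("https://www.google.com")
                .timeout(10_000); // milliseconds
    }

    public static void main(String[] args) throws IOException {
        Document doc = buildRequest("https://example.com").get(); // sends a GET and parses the response
        System.out.println(doc.title());
    }
}
```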

Retrieving the needed elements from the parsed HTML document is the main challenge and the largest part of the remaining work. You will use methods such as getElementById or getElementsByTag. Open the website you intend to scrape and find the sections you want to collect. Select them with your browser's developer tools and inspect their HTML code.
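The two lookup methods might be used like this. The HTML snippet and the `product-name` id are hypothetical; in a real scraper the `Document` would come from `Jsoup.connect(url).get()`.

```java
import java.util.ArrayList;
import java.util.List;
import org.jsoup.Jsoup;
import org.jsoup.nodes.Document;
import org.jsoup.nodes.Element;

class DomLookup {

    // Stand-in markup for a product page
    static final String SAMPLE_HTML =
            "<h1 id='product-name'>Desk Lamp</h1>"
            + "<ul><li>Adjustable arm</li><li>LED bulb</li></ul>";

    // Text of the element with the given id, or null if no element has that id
    static String textById(Document doc, String id) {
        Element el = doc.getElementById(id);
        return el == null ? null : el.text();
    }

    // Text of every element with the given tag name, in document order
    static List<String> textsByTag(Document doc, String tag) {
        List<String> out = new ArrayList<>();
        for (Element el : doc.getElementsByTag(tag)) {
            out.add(el.text());
        }
        return out;
    }

    public static void main(String[] args) {
        Document doc = Jsoup.parse(SAMPLE_HTML);
        System.out.println(textById(doc, "product-name"));
        textsByTag(doc, "li").forEach(System.out::println);
    }
}
```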

Now use the first() method to get the first item of the returned list (JSoup's Elements class extends ArrayList). Then use the text() method to collect the text from the chosen elements. JSoup has many more methods like these two. In most other cases, you will use the select() method with a CSS selector.
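Here is a small sketch that combines `select()`, `first()`, and `text()`. The `div.price` selector and the sample markup are made up for illustration.

```java
import org.jsoup.Jsoup;
import org.jsoup.nodes.Document;
import org.jsoup.nodes.Element;
import org.jsoup.select.Elements;

class FirstAndText {

    static final String SAMPLE_HTML =
            "<div class='price'>$19.99</div><div class='price'>$24.99</div>";

    // Returns the text of the first element matching the CSS query, or "" if nothing matches
    static String firstText(Document doc, String cssQuery) {
        Elements matches = doc.select(cssQuery); // Elements is a list of Element objects
        Element first = matches.first();         // null when the list is empty
        return first == null ? "" : first.text();
    }

    public static void main(String[] args) {
        Document doc = Jsoup.parse(SAMPLE_HTML);
        System.out.println(firstText(doc, "div.price"));
    }
}
```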

Web Scraping With Java Using HtmlUnit

In Java, HtmlUnit is a powerful solution for many different browser tasks. For data harvesting, it is useful for reading the content of pages at given URLs. To begin this part of the tutorial, repeat the first step from the previous guide.

Now create a new project with the library installed. Then import the HTML from the page you want to scrape. HtmlUnit uses the WebClient class to fetch page data, so you also need to create a new instance of this class.
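A minimal sketch of creating a `WebClient` and loading a page, assuming HtmlUnit 3.x (`org.htmlunit` package). The client is closed through try-with-resources, and the method returns the page title; `https://example.com` is a placeholder.

```java
import java.io.IOException;
import org.htmlunit.WebClient;
import org.htmlunit.html.HtmlPage;

class HtmlUnitFetch {

    // Loads a page with a fresh WebClient and returns the text of its <title>
    static String fetchTitle(String url) throws IOException {
        try (WebClient webClient = new WebClient()) {
            webClient.getOptions().setJavaScriptEnabled(false);
            webClient.getOptions().setCssEnabled(false);
            HtmlPage page = webClient.getPage(url); // fails with ClassCastException if the response is not HTML
            return page.getTitleText();
        }
    }

    public static void main(String[] args) throws IOException {
        System.out.println(fetchTitle("https://example.com"));
    }
}
```

Creating one client per call keeps the sketch simple; a scraper that visits many pages would normally reuse a single `WebClient` so that cookies and connections are shared.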

After this, start querying the HTML with methods like getElementById(). You can also use XPath methods such as getByXPath() to retrieve HTML elements, while querySelector() and querySelectorAll() use CSS selectors and return DomNode elements. Finally, use any suitable method to extract the result and print it in the format you need.
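The three lookup styles could look like this in HtmlUnit 3.x. The id `headline`, the XPath `//li`, and the CSS query `p.note` are hypothetical; replace them with what you find in the developer tools.

```java
import java.io.IOException;
import java.util.ArrayList;
import java.util.List;
import org.htmlunit.WebClient;
import org.htmlunit.html.DomElement;
import org.htmlunit.html.DomNode;
import org.htmlunit.html.HtmlPage;

class HtmlUnitQueries {

    // Collects text from a page using three different lookup styles
    static List<String> extract(HtmlPage page) {
        List<String> out = new ArrayList<>();

        // 1. By id attribute; returns null if no element has this id
        DomElement headline = page.getElementById("headline");
        if (headline != null) {
            out.add(headline.getTextContent());
        }

        // 2. By XPath: every <li> element in the document
        for (Object node : page.getByXPath("//li")) {
            out.add(((DomNode) node).getTextContent());
        }

        // 3. By CSS selector: querySelectorAll returns DomNode objects
        for (DomNode node : page.querySelectorAll("p.note")) {
            out.add(node.getTextContent());
        }
        return out;
    }

    public static void main(String[] args) throws IOException {
        try (WebClient webClient = new WebClient()) {
            webClient.getOptions().setJavaScriptEnabled(false);
            HtmlPage page = webClient.getPage("https://example.com");
            extract(page).forEach(System.out::println);
        }
    }
}
```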

Making Your Own Web Scraper

Now that you know the fundamentals, we can move on to building our own scraper. First, update all the required tools to their latest versions and make sure they are ready to use.

Launch a new project and locate the file named build.gradle. In this file, add HtmlUnit as a dependency. Then go to the page you want to scrape and locate the elements you want to collect. Open the developer tools from your browser menu and find the HTML code for those elements.

At this point, you need to obtain the page's HTML code to continue. Send a request to the page, and the received data is collected into a single HtmlPage object. Also add the HtmlUnit imports you need to work with the returned page.

Now parse the harvested data further to make it easier to read. Use methods such as getAnchors() and getTitleText() to extract the information, then export it to a CSV file. The FileWriter class lets you create a new file and write the data to it.
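A sketch of this last step, assuming HtmlUnit 3.x. It writes one CSV row per link (page title, link text, and `href`) using `FileWriter`. The column names and the `links.csv` file name are arbitrary choices, and the quoting follows the common RFC 4180 convention of doubling embedded quotes.

```java
import java.io.FileWriter;
import java.io.IOException;
import java.io.Writer;
import java.nio.charset.StandardCharsets;
import org.htmlunit.WebClient;
import org.htmlunit.html.HtmlAnchor;
import org.htmlunit.html.HtmlPage;

class LinksToCsv {

    // Wraps a field in quotes and doubles any embedded quotes
    static String csv(String field) {
        return "\"" + field.replace("\"", "\"\"") + "\"";
    }

    // Writes a header row, then one row per <a> element on the page
    static void exportLinks(HtmlPage page, String csvPath) throws IOException {
        String title = page.getTitleText();
        try (Writer out = new FileWriter(csvPath, StandardCharsets.UTF_8)) {
            out.write("page_title,link_text,href\n");
            for (HtmlAnchor anchor : page.getAnchors()) {
                out.write(csv(title) + "," + csv(anchor.getTextContent().trim()) + ","
                        + csv(anchor.getHrefAttribute()) + "\n");
            }
        }
    }

    public static void main(String[] args) throws IOException {
        try (WebClient webClient = new WebClient()) {
            webClient.getOptions().setJavaScriptEnabled(false);
            HtmlPage page = webClient.getPage("https://example.com");
            exportLinks(page, "links.csv");
        }
    }
}
```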

In the end, you will have a single structured file with all the data you collected. Keep in mind that some harvesting tasks may require a static residential proxy or another advanced connection technique to succeed.

Conclusion

Modern companies use data harvesting to analyze a wide variety of market metrics. The need for tools for this work keeps growing, and Java provides several of them. This article walked through web scraping in Java with its most popular libraries. Java scraping techniques work in projects of almost any scale and complexity, and the available tools let you build your own data harvesting tool that fits your needs. You can also consider pairing your Java scraper with a residential proxy setup, which lets you choose from a large pool of countries and locations for any of your tasks.

Frequently Asked Questions

Please read our Documentation if you have questions that are not listed below.


What libraries can I use for scraping in Java?

Java has many libraries that are well suited to web scraping. JSoup and HtmlUnit are the two most popular choices and can cover most of your needs.


What proxies are the best for web scraping in Java?

The right type of proxy depends on your current needs. Residential proxies are considered among the most versatile: with them, you can switch countries and access content that is blocked in your area.


Why do I need to use a proxy in scraping tasks?

Most modern sites have advanced systems to protect them from scraping. However, proxies and randomized user agents can help you avoid being blocked while scraping.

Top 5 posts

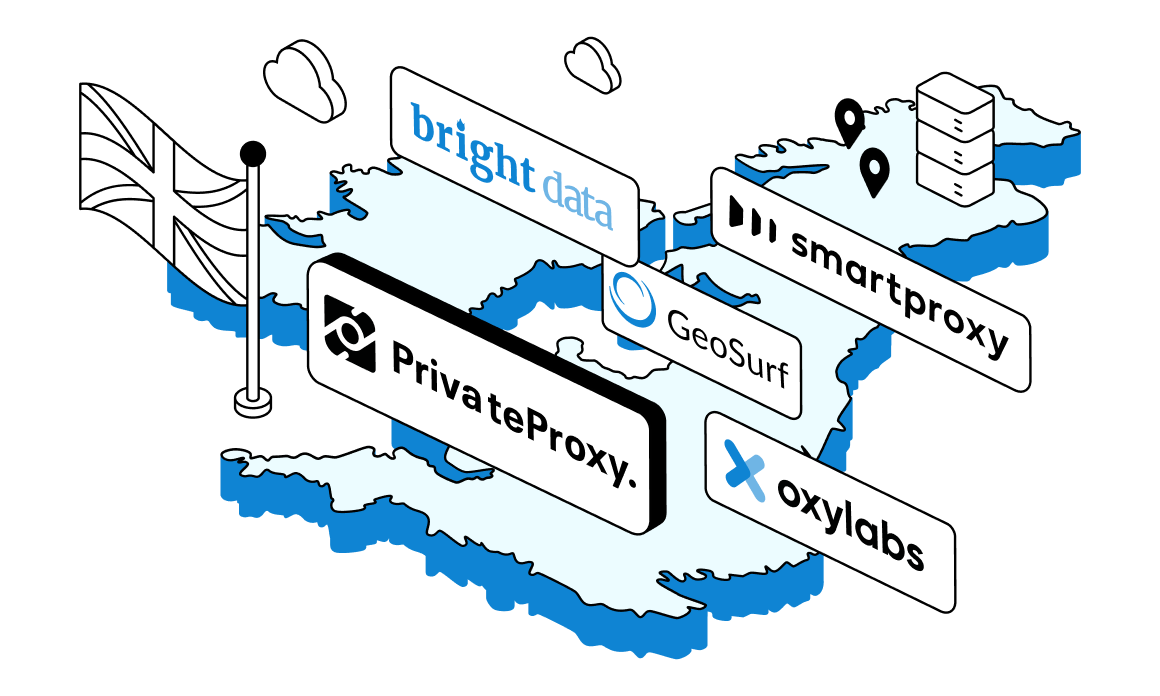

If you have a geo-specific scraping or data collection mission involving websites or services located in the United Kingdom, you will be better off with a set of reliable residential or datacenter proxies with UK IP addresses.

In this post we will cover the most promising and reputable UK proxy providers in the industry, talk about the use cases for such servers and dive into the specifics of using such servers.